Keep your data pipelines reliable and your data trusted with Cloudesign's DataOps services.

Transform your lifecycle with a high-performance DevOps platform engineered for speed, security, and scalability. Our DevOps consulting team implements CI/CD automation and Infrastructure as Code to reduce time-to-market while optimizing operational costs. By integrating DevSecOps and AI-driven AIOps, we ensure your infrastructure remains resilient and secure against modern technical threats.

DataOps Experts

Years of Experience

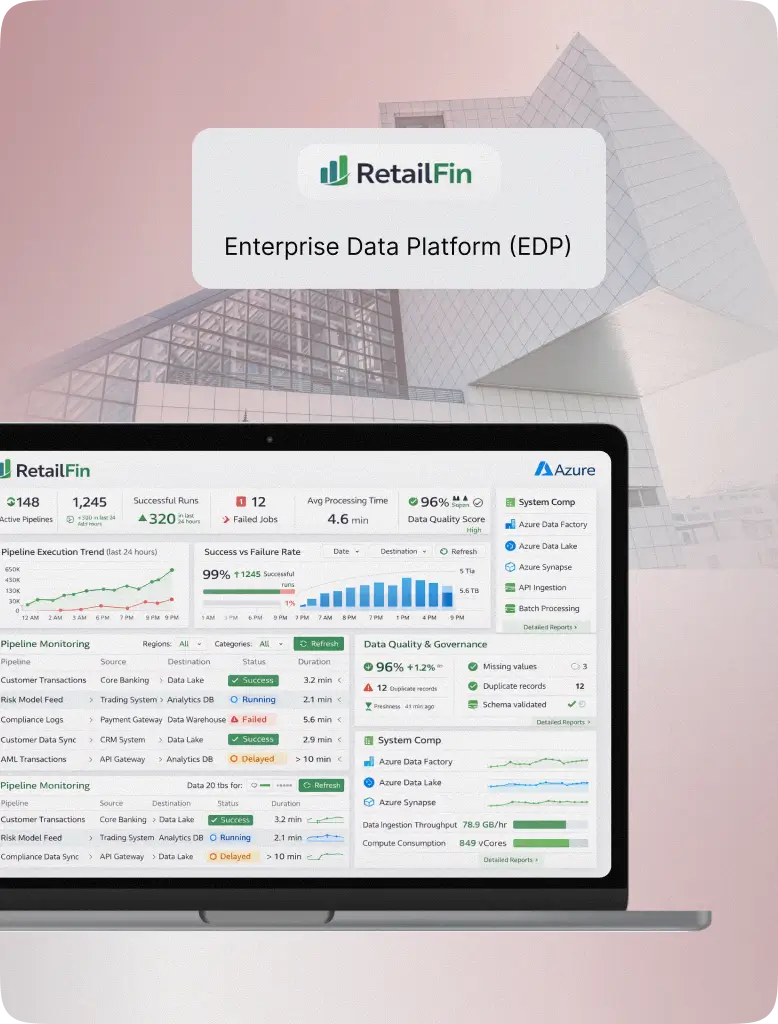

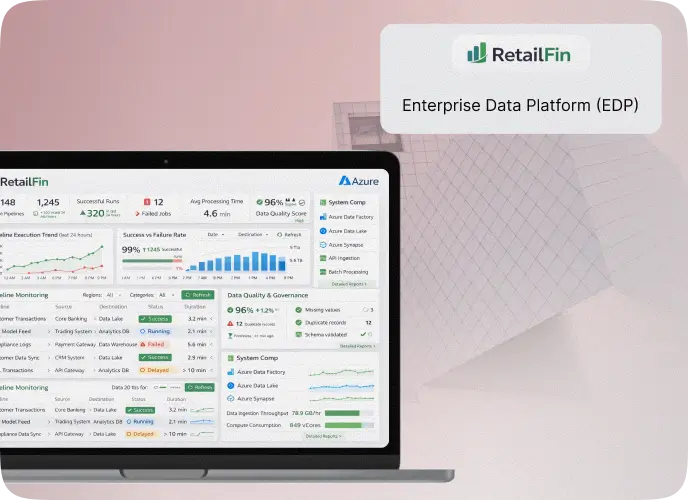

Case Study

A fintech organization operating on Azure manages transactional, compliance, and customer data across multiple systems to support analytics, risk modeling, and regulatory reporting.

Modernize your data lifecycle with automated, scalable, and secure solutions.

Our DataOps consulting team partners with leadership to dismantle data silos and define a technical transformation roadmap. We evaluate your current maturity, address technical debt, and align data architecture with business KPIs to ensure long-term scalability.

Delivering scalable data excellence through strategic automation and engineering.

Access on-demand, certified experts to scale your technical capacity and accelerate project delivery through our flexible DataOps-as-a-Service model.

Ensure "Gold Standard" accuracy across your organization by deploying automated inspection layers that verify data health at every stage of the pipeline.
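One way to picture an automated inspection layer is a small set of named rules applied to every row at each pipeline stage. The sketch below is illustrative only: `Check`, `inspect`, and the sample rule names are hypothetical and are not taken from any specific Cloudesign tool or third-party library.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List

Row = Dict[str, Any]


@dataclass
class Check:
    """A named data-quality rule (hypothetical helper for illustration)."""
    name: str
    predicate: Callable[[Row], bool]


def inspect(stage: str, rows: List[Row], checks: List[Check]) -> List[str]:
    """Run every check against every row and return readable failure messages."""
    failures: List[str] = []
    for index, row in enumerate(rows):
        for check in checks:
            if not check.predicate(row):
                failures.append(f"{stage}: row {index} failed {check.name}")
    return failures


# Example rules; the field names "id" and "amount" are assumptions.
checks = [
    Check("amount_non_negative", lambda r: r["amount"] >= 0),
    Check("id_present", lambda r: r.get("id") is not None),
]

rows = [{"id": 1, "amount": 10.0}, {"id": None, "amount": -5.0}]
for message in inspect("ingest", rows, checks):
    print(message)
```

Calling the same function after ingestion, transformation, and publishing gives a per-stage view of data health; a real deployment would more likely rely on a dedicated data-quality framework than on hand-written predicates.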

Rapidly move from raw ingestion to actionable intelligence by utilizing DataOps automation tools to eliminate manual bottlenecks and development delays.

Maximize ROI with intelligent orchestration that dynamically scales your DataOps platform infrastructure in response to real-time workload demands.

Implementing AI-DataOps through intelligent, self-evolving data pipeline ecosystems.

Partnering with industry leaders to deliver secure, AI-ready data solutions.

Receive regular project updates and maintain full ownership of all code and creative elements developed exclusively for your unique data ecosystem.

We use cutting-edge project management and agile development to ensure high-quality data solutions are delivered exactly when expected.

Whether you require custom-built pipelines or strategic optimization of existing ones, we align every deliverable with your digital strategy.

Every line of code undergoes thorough testing and security audits to ensure we deliver robust, enterprise-grade solutions that protect your sensitive business data.

Our experts leverage the latest technologies to deliver user-friendly, scalable, and secure data products that drive measurable business results.

Choose from versatile models, including elite staff augmentation, to scale your technical capacity seamlessly as your data requirements evolve.

Standardize your infrastructure with modern DataOps tools to eliminate silos and accelerate decision-making.

We design complex directed acyclic graphs (DAGs) to manage cross-functional dependencies and automate workflow execution. We implement dynamic task generation and sensor-based triggers to ensure your pipelines respond instantly to data arrivals or external events. Our custom monitoring hooks provide granular visibility into task-level performance, allowing for rapid debugging and resource optimization across the entire orchestration layer.
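The concepts in this paragraph (dependency ordering, dynamic task generation, sensor-style triggers, and monitoring hooks) can be sketched with the Python standard library alone. This is a toy model under stated assumptions, not the API of any real orchestrator and not a description of Cloudesign's implementation: `MiniDag`, `wait_for`, and `build_pipeline` are hypothetical names, and it requires Python 3.9+ for `graphlib`.

```python
import time
from graphlib import TopologicalSorter
from typing import Callable, Dict, Iterable, List, Set


class MiniDag:
    """Toy DAG runner: executes tasks sequentially in dependency order."""

    def __init__(self) -> None:
        self.tasks: Dict[str, Callable[[], None]] = {}
        self.upstream: Dict[str, Set[str]] = {}
        # Monitoring hooks receive (task_name, elapsed_seconds) after each task.
        self.hooks: List[Callable[[str, float], None]] = []

    def add_task(self, name: str, fn: Callable[[], None],
                 depends_on: Iterable[str] = ()) -> None:
        self.tasks[name] = fn
        self.upstream[name] = set(depends_on)

    def run(self) -> List[str]:
        # static_order() yields each task only after all of its upstream tasks.
        order = list(TopologicalSorter(self.upstream).static_order())
        for name in order:
            start = time.perf_counter()
            self.tasks[name]()
            elapsed = time.perf_counter() - start
            for hook in self.hooks:
                hook(name, elapsed)
        return order


def wait_for(condition: Callable[[], bool], timeout_s: float = 1.0,
             poke_interval_s: float = 0.01) -> bool:
    """Sensor-style polling: re-check the condition until it holds or time runs out."""
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        if condition():
            return True
        time.sleep(poke_interval_s)
    return condition()


def build_pipeline(sources: List[str], arrived: Set[str], log: List[str]) -> MiniDag:
    dag = MiniDag()

    def sensor() -> None:
        if not wait_for(lambda: all(s in arrived for s in sources)):
            raise TimeoutError("expected data did not arrive")

    dag.add_task("wait_for_data", sensor)

    # Dynamic task generation: one load task per source in the list.
    load_names = []
    for src in sources:
        name = f"load_{src}"
        dag.add_task(name, lambda src=src: log.append(src),
                     depends_on=["wait_for_data"])
        load_names.append(name)

    dag.add_task("publish", lambda: log.append("publish"), depends_on=load_names)
    return dag


# Demo with assumed source names; the hook prints per-task timings.
demo_log: List[str] = []
demo = build_pipeline(["orders", "payments"], {"orders", "payments"}, demo_log)
demo.hooks.append(lambda name, secs: print(f"{name} took {secs:.4f}s"))
demo.run()
```

A production orchestrator adds scheduling, retries, parallel execution, and persistent state on top of this shape; the sketch only shows how the pieces relate.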

Read our newest articles for the latest trends and browse our FAQ for everything you need to know.

Ready to discuss your next digital transformation project? Our experts are here to help you plan, design, and engineer solutions built for scale and performance.

Share your idea, and our team will schedule a discovery call to understand your goals and challenges.

Receive a tailored technology roadmap outlining architecture, tools, and timelines to bring your vision to life.

Once aligned, our engineers integrate seamlessly with your team to execute and accelerate delivery.

Send us an email at sales@cloudesign.com

BDA Complex, 7th Cross, 16 B Main, B Block, Koramangala, Bengaluru, 560034

Ajmera Sikova, 606, Ghatkopar West, Mumbai, Maharashtra 400086

© 2025 Cloudesign Technology Pvt Ltd. All Rights Reserved